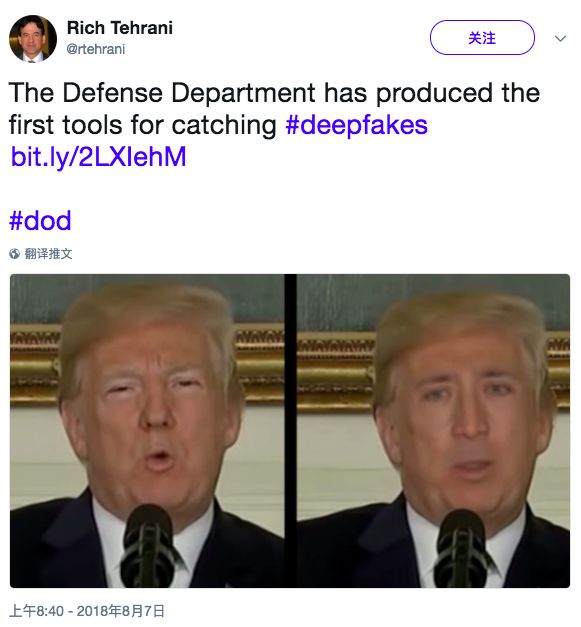

The US Department of Defense has developed the world's first "anti-AI face-changing" forensic detection tool, dedicated to detecting AI face swaps and face-swap fraud. AI face-changing technology, typified by GANs, is now widespread, and the corresponding detection and recognition technology has to improve as well. This is just the beginning of a long and fascinating AI arms race.

Yesterday, Xin Zhiyuan introduced the real-time 3D face-changing technology of the Pinscreen team led by Professor Li Hao of the University of Southern California, which worried many readers.

The real-time face changing technology of Li Hao's team: the left is the image taken by the iPhone, and the right is the real-time generated 3D face. Source: fxguide.com

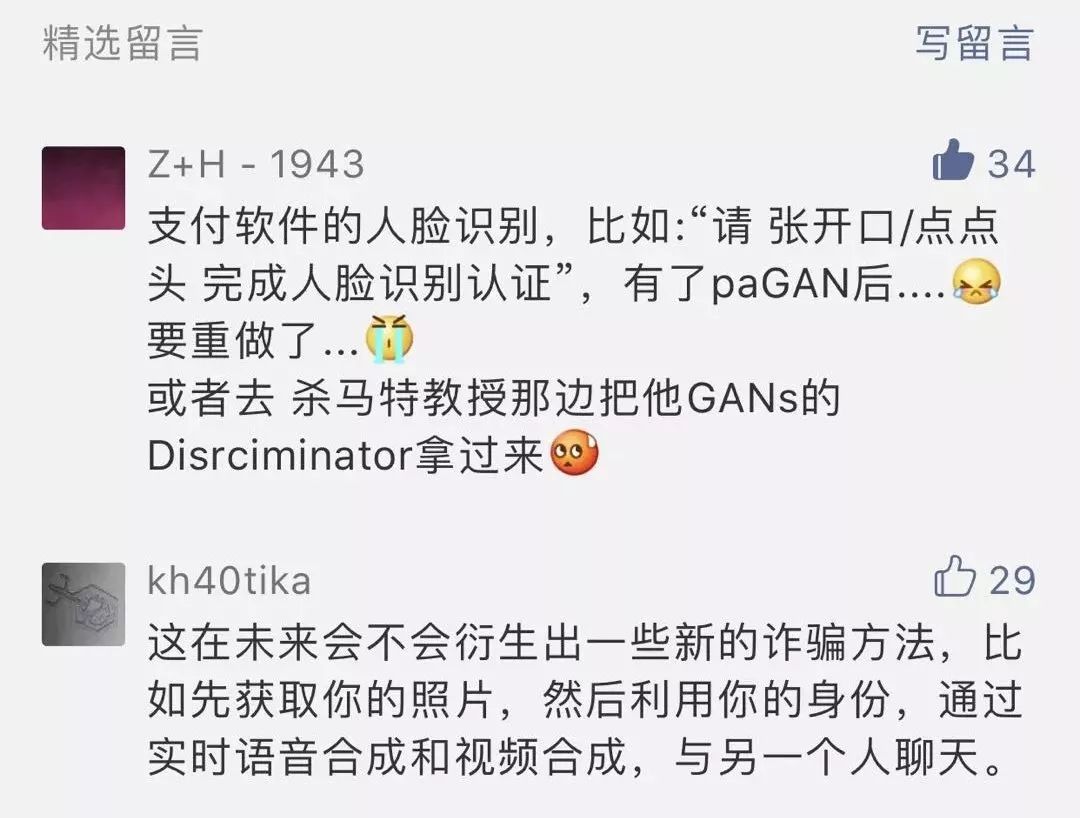

Some people worry that Alipay's face recognition technology will fail, or that a new kind of fraud will emerge: an offender could use your picture to pretend to be you and chat with another person...

These worries are well founded, because today's AI face-changing technology has reached a remarkably high level. Almost anyone using AI software can imitate the face of a politician, and someone with a certain amount of skill can produce results that are difficult to tell apart from the real thing:

These AI face-changing tools are actually derived from the powerful image generation capabilities of the Generative Adversarial Network (GAN).

However, DARPA, a research agency of the US Department of Defense, has now developed the first "anti-face-changing" AI forensic detection tool. The principle is to use AI to fight AI.

This AI anti-face-changing forensic tool is part of DARPA's Media Forensics project. As early as May of this year, DARPA stated the need to automate existing forensic detection tools so that they can detect the AI-generated fake faces that have recently emerged.

Matthew Turek, head of the DARPA Media Forensics project, said that they found some subtle clues in the fake faces generated by GANs, which make it possible to detect whether a face in an image or video is real or generated by AI.

Many still remember that in 2016, the "3·15 Evening Party" showed a photo "deceiving" facial recognition software, which made face recognition a hot topic overnight.

Now, more advanced AI face-changing technology and AI face-changing detection technology are starting a long, arduous, but also fascinating AI arms race.

At the "3·15" party in 2016, the host used an AI technology to convert static photos into dynamic photos, thereby deceiving the login system. This technology can run on mobile phones.

It depends on who can go better and faster.

The US Department of Defense has developed the first "anti-AI face-changing" criminal investigation tool: the accuracy rate is as high as 99%

This DARPA tool is mainly based on a finding by Professor Siwei Lyu of the State University of New York at Albany and his co-authors Yuezun Li and Ming-Ching Chang: fake faces generated using AI technology (generally known as DeepFake) rarely or never blink, because they are trained on photos of people with their eyes open.

"Because most of the training data sets do not contain face images with closed eyes, the faces generated by AI lack the blinking function," Lyu said: "Therefore, the lack of blinking is a good way to determine whether a video is true or false."

The paper details how they combined two neural networks to more effectively expose videos synthesized by AI. These videos often neglect "spontaneous, unconscious physiological activities, such as breathing, pulse, and eye movements."

By effectively predicting the state of the eyes, the accuracy rate reaches 99%.

"We also need to explore other deep neural network architectures in order to detect closed eyes more effectively," Lyu added. "Our current method only uses the lack of blinking as a cue to detect AI tampering. However, dynamic blinking patterns should also be considered: blinking too quickly or too frequently is physiologically unlikely and should also be regarded as a sign of tampering."
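The blinking cue Lyu describes can be illustrated with a small sketch. This is not the researchers' method: it assumes some separate eye-state classifier has already produced a per-frame probability that the eyes are closed, and the function names, the 0.5 threshold, and the 2 to 60 blinks-per-minute range are all hypothetical values chosen only for illustration.

```python
from typing import List


def count_blinks(closed_prob: List[float],
                 threshold: float = 0.5,
                 min_frames: int = 2) -> int:
    """Count blinks as runs of >= min_frames consecutive 'eyes closed' frames.

    closed_prob holds one probability per video frame (assumed to come from
    an external eye-state classifier, which is not implemented here).
    """
    blinks = 0
    run = 0  # length of the current run of closed-eye frames
    for p in closed_prob:
        if p >= threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # video ends while the eyes are still closed
        blinks += 1
    return blinks


def blink_rate_suspicious(closed_prob: List[float],
                          fps: float,
                          min_per_minute: float = 2.0,
                          max_per_minute: float = 60.0) -> bool:
    """Flag a clip whose blink rate is implausibly low or implausibly high.

    The rate bounds are illustrative assumptions, not values from the paper.
    """
    if not closed_prob or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    minutes = len(closed_prob) / fps / 60.0
    rate = count_blinks(closed_prob) / minutes
    return rate < min_per_minute or rate > max_per_minute
```

A clip with no detected blinks, or with blinks every few frames, would be flagged; a real system would need a trained eye-state model and thresholds tuned on labeled video.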

A team of UAlbany researchers used blink detection on the original video (above) and fake video generated by DeepFake (below) to determine whether the video was faked by AI. In the original video, blinking was detected within 6 seconds. Source: UAlbany.edu

Others participating in the DARPA Challenge are also exploring similar techniques, such as automatically detecting strange head movements, strange eye colors, and so on.

Hany Farid, a digital forensics expert at Dartmouth College, said that current AI anti-face-changing tools mainly rely on such physiological signals, which, at least so far, are difficult to imitate.

The introduction of these AI forensic tools only marks the beginning of an AI arms race between AI video forgers and digital forensic investigators.

Hany Farid said that a key issue at present is that machine learning systems can be trained further and then outmaneuver the current anti-face-changing tools.

DARPA "Anti-AI Face-changing Project": Ensure the detection of the most advanced AI fraud technology

The Media Forensics project funded by DARPA aims to identify fake pictures and videos generated by machine learning algorithms. Researchers are trying to develop a scalable platform tool to identify fake videos and images of the "Deepfake" kind, especially those based on GAN models.

Deepfake is often used to generate spoof videos or clips of some celebrities or politicians, but it may also be used to maliciously spread false information to achieve dangerous purposes such as inciting and creating confusion.

Detecting the authenticity of digital content usually involves three steps:

The first is to check the digital file for signs that two images or videos have been spliced together;

The second is to check the physical properties of the image, such as the lighting, and look for signs of inconsistency;

The third step, which is the hardest to automate, is to check whether the image or video content is logically contradictory. For example, the weather shown in the image does not match the actual weather on the shooting date, or the background is inconsistent with the claimed shooting location.

At present, tools and applications for assessing the large volume of digital content that is hard to tell apart as real or fake are still not widely applicable. In important applications involving forensics, evidence analysis and identification, neither the scalability nor the robustness of such tools fully meets practical needs.

DARPA's MediFor project brings together world-class researchers to develop technologies that can automatically assess the integrity of images or videos and to integrate these technologies into an end-to-end platform. If the project succeeds, the MediFor platform will automatically detect manipulations of an image or video, give detailed information on how the specific changes were made, and explain its reasoning about whether the content is intact, so that one can decide whether a suspicious image or video can be used as evidence.

DARPA project leader David Gunning said: "In theory, if you give a GAN all the techniques currently used to detect it, it can learn to bypass those detection techniques." However, while working against Deepfake, the researchers found an important weakness: the person in the fake videos it generates never blinks. This is because the training data Deepfake uses to generate fake videos consists of still pictures, and people almost always have their eyes open in photos.

DARPA researchers said the agency will continue to conduct more tests to "ensure that the identification technology under development can detect the latest counterfeiting technology."

From Deepfake to HeadOn: A brief history of the development of face-changing technology

DARPA's concerns are not groundless. Today's face-changing technology has reached a point where it threatens security. At first, it might have been used to make Trump and Putin appear to express political views; but later DeepFake appeared, allowing ordinary people to use this technology to create fake pornographic videos and fake news. As the technology becomes more advanced, it also creates hidden dangers for security.

1. Deepfake

Let's first take a look at what exactly Deepfake is.

Deepfake, a combination of "deep learning" and "fake", is a human image synthesis technology based on deep learning. It can combine and superimpose any existing image and video onto the source image and video.

Deepfake allows people to use simple videos and open source code to create fake pornographic videos, fake news, malicious content, and so on. Later, deepfakes also released a desktop application called FakeApp, which allows users to easily create and share face-changing videos, further lowering the technical threshold to the consumer level.

Trump's face was changed to Hillary Clinton

Because its malicious use drew a lot of criticism, Deepfake has been banned by websites such as Reddit and Twitter.

2. Face2Face

Face2Face is also a "face changing" technology that has caused great controversy. It appeared earlier than Deepfake and was published at CVPR 2016 by the team of Justus Thies, a scientist at the University of Erlangen-Nuremberg in Germany. This technology can faithfully transfer one person's facial expressions, facial muscle changes when speaking, and mouth shape to another person's face in real time. Its effects are as follows:

Face2Face is considered to be the first model that can perform face conversion in real time, and its accuracy and realism are much higher than previous models.

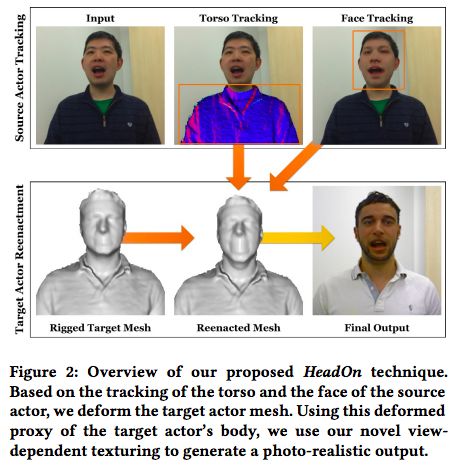

3. HeadOn

HeadOn can be described as an upgraded version of Face2Face, created by the original Face2Face team. The team's work on Face2Face provides the framework for most of HeadOn's capabilities, but Face2Face can only transfer facial expressions, while HeadOn adds the transfer of body and head movements.

In other words, HeadOn can not only "change the face", it can also "change the person": following the actions of the input performer, it changes the facial expressions, eye movements and body movements of the person in the video in real time, so that the person in the image appears to be really talking and moving.

Illustration of HeadOn technology

In the paper, the researchers called this system "the first real-time source-to-target reenactment approach for complete human portrait videos that enables transfer of torso and head motion, face expression, and eye gaze."

4. Deep Video Portraits

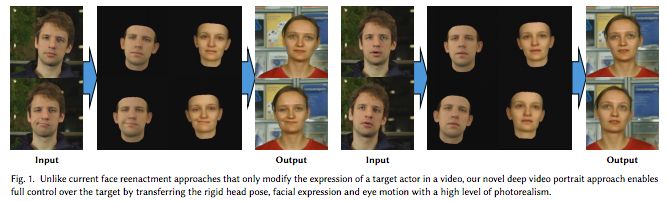

Deep Video Portraits is a paper submitted by researchers from Stanford University, the Technical University of Munich and other institutions to the SIGGRAPH conference in August this year. It describes an improved "face-changing" technology that can use one person's face to reproduce another person's body movements, facial expressions and mouth shapes in a video.

For example, replace the face of an ordinary person with the face of Obama. DeepVideoPortraits can learn the elements of the face, eyebrows, corners of the mouth, and background and their motion forms through a video of the target person (in this case, Obama).

5. paGAN: Use a single photo to generate super-realistic animated character avatars in real time

The latest "face changing" technology to cause a stir comes from the team of Chinese professor Li Hao. They developed a new machine learning technique, paGAN, which can track human faces at 1000 frames per second and generate super-realistic animated portraits in real time from a single photo. The paper has been accepted by SIGGRAPH 2018.

Pinscreen took a photo of "Los Angeles Times" reporter David Pierson as input (left) and made his 3D avatar (right). The generated 3D face is animated by Li Hao's own movements (middle). This video was made 6 months ago, and the Pinscreen team said it has already surpassed these results internally.
